Hi all,

My friends and I are a team of application developers, mainly building JSON web services for web and mobile apps.

Between us we have experience in several languages: Scala, Perl, and Python.

We are now discussing which language to use for our next projects.

So I put together some benchmarks, since performance is one of the factors in the decision.

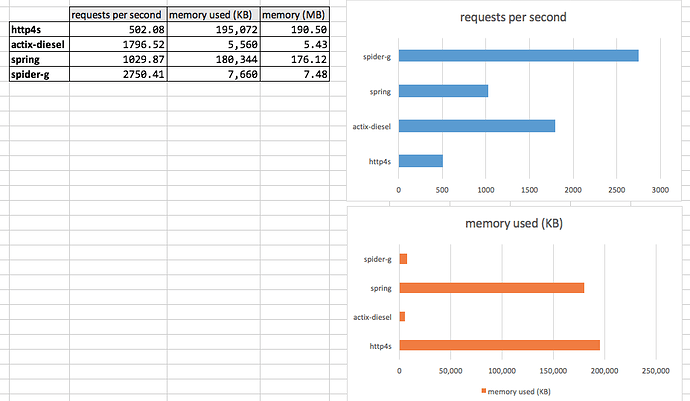

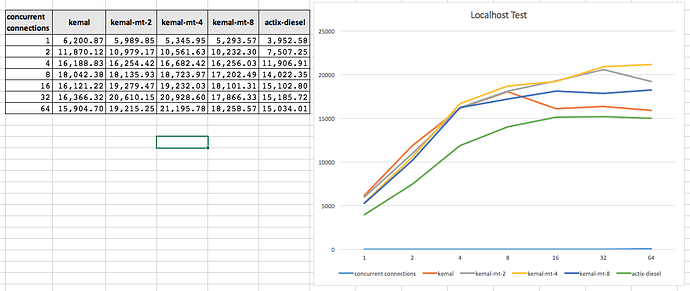

The benchmarks cover several frameworks: http4s (Scala), Spring (Java), Actix (Rust), and Spider-Gazelle (Crystal).

I ran ab (ApacheBench) with this command, i.e. 1000 requests in total with 4 concurrent connections: $ ab -n 1000 -c 4 http://127.0.0.1:555XX/a-url-path
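To make the two ab options concrete, here is a rough Python sketch of what `-n 1000 -c 4` amounts to: a fixed total number of requests issued by a fixed number of concurrent workers, with requests per second computed as total requests divided by elapsed time. This is not how ab itself is implemented, and `http_get` and `measure_rps` are names I made up for illustration.

```python
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Tuple


def http_get(url: str) -> bool:
    """Perform one GET request and report whether it returned HTTP 200."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        resp.read()  # drain the body so the whole response is included in the timing
        return resp.status == 200


def measure_rps(send: Callable[[], bool], total: int, concurrency: int) -> Tuple[int, float]:
    """Call `send` `total` times using `concurrency` worker threads.

    Returns (number of successful calls, requests per second).
    """
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        ok = sum(pool.map(lambda _: send(), range(total)))
    elapsed = time.perf_counter() - started
    return ok, total / elapsed
```

With a service running locally, something like `measure_rps(lambda: http_get(url), total=1000, concurrency=4)` would roughly mirror the ab call above, where `url` is whatever endpoint is being tested. A Python client like this has its own overhead, so its numbers are not comparable to ab's.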

The benchmark measures how fast a web service can deliver a JSON response built from a list of records queried from a PostgreSQL database.

This is a very common scenario for web and mobile apps: query records from the database and return them in JSON format as the HTTP response:

[{"id":1,"code":"FFFFFF","name":"White"},{"id":2,"code":"C0C0C0","name":"Silver"},{"id":3,"code":"000000","name":"Black"},{"id":4,"code":"0000A0","name":"Dark Blue"},{"id":5,"code":"FF0000","name":"Red"}]
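For anyone who wants to picture the work each request does after the query returns, here is a small Python sketch of the row-to-JSON step. It is not the benchmark code: the rows are hard-coded as a stand-in for the PostgreSQL result, and `COLUMNS`, `ROWS`, and `rows_to_json` are hypothetical names used only for this example.

```python
import json
from typing import List, Sequence, Tuple

# Column names taken from the sample response above.
COLUMNS = ("id", "code", "name")

# Hard-coded stand-in for the rows a database driver would return.
ROWS: List[Tuple[int, str, str]] = [
    (1, "FFFFFF", "White"),
    (2, "C0C0C0", "Silver"),
    (3, "000000", "Black"),
    (4, "0000A0", "Dark Blue"),
    (5, "FF0000", "Red"),
]


def rows_to_json(rows: Sequence[Sequence[object]]) -> str:
    """Turn row tuples into a compact JSON array of objects."""
    records = [dict(zip(COLUMNS, row)) for row in rows]
    # Compact separators drop the spaces json.dumps adds by default.
    return json.dumps(records, separators=(",", ":"))


body = rows_to_json(ROWS)
```

Here `body` holds the same compact JSON array as the sample response, which a handler would send with a `Content-Type: application/json` header.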

Here are the results so far (requests per second, and memory usage in KB/MB):

The full source code is here:

I ran the benchmarks on a VPS (1 CPU core) with this spec: model name: Intel® Xeon® Gold 6140 CPU @ 2.30GHz

I would like some suggestions:

- Is there anything missing in the Actix (Rust) web service I wrote? Its performance is lower than the Crystal one.

A note: for the Crystal web services I don't use any ORM, because doing CRUD operations without an ORM is very common.

Thanks, everyone.